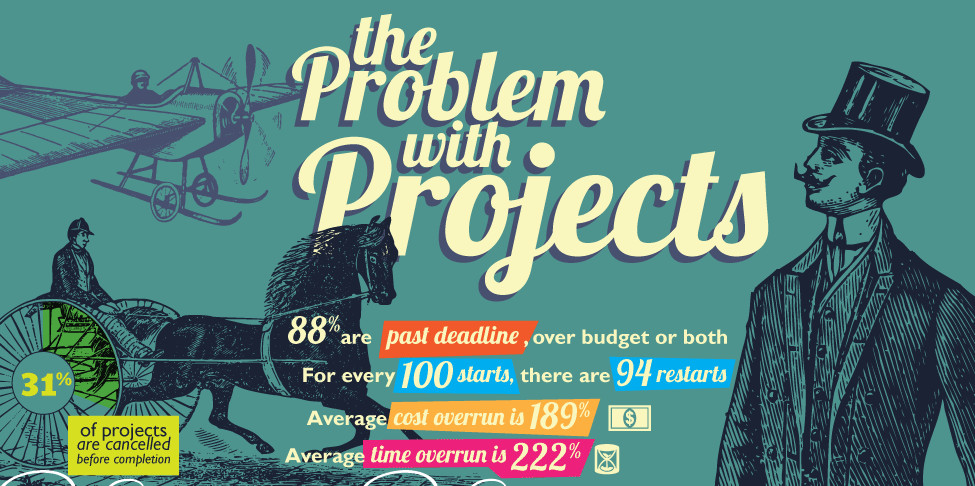

Many books have been written about IT project disasters, and many studies show that the failure rate of projects remains appallingly high even today. But how do you spot a project that is going to be a disaster before it turns into one?

With over 30 years’ experience of rescuing and resolving I.T./Digital disasters of one type or another, I think there are several key indicators which, taken together, strongly point to a project problem, the prelude to an I.T. disaster. The intent here is to help business sponsors spot these indicators, and so give them the chance to manage the issues before they escalate into a full-blown disaster.

Most readers will be aware of the three governing dynamics of projects: Time, Cost, and Scope. I have indicators for these, and for a fourth, Commitment. Taken together they can be used as a reference point, perhaps to call a timeout, and fix issues before they become crises.

The 25% Combo, Time & Cost.

Let’s look at the combination of time and cost. Combined, they can be treated as a single projected overrun. Let’s take an example: you are starting a complex IT project with 20% contingency, where the 20% can be consumed in time (late delivery), in budget ($ overspend), or in a mix of the two.

Later, this combined 20% is projected to be consumed, with a 15% cost overrun and a 6% time delay. You then have a problem that needs dealing with, as it is likely to grow. In our problem project quiz we have set the overrun bar at 25% to give a little margin of error.

My rationale is this: if the project spends its 20% contingency but comes in on time, that’s a success. Likewise, if it goes 20% beyond its dates but remains within budget, it’s a success. And if it is 10% over on both, that’s a win too. If the expected overrun is significantly beyond this, you have a problem.
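To make the arithmetic concrete, here is a minimal sketch of the combined overrun check. The function names, the status labels, and the choice to treat anything strictly above the 25% bar as a "problem" are my own assumptions for illustration; the 20% contingency and 25% bar come from the example above. Inputs are assumed to be non-negative percentages.

```python
def combined_overrun(cost_overrun_pct: float, time_overrun_pct: float) -> float:
    """Add the projected cost and time overruns (both as % of plan)."""
    return cost_overrun_pct + time_overrun_pct


def overrun_status(cost_overrun_pct: float, time_overrun_pct: float,
                   contingency_pct: float = 20.0, bar_pct: float = 25.0) -> str:
    """Classify a projected overrun (labels are hypothetical, not from a standard)."""
    total = combined_overrun(cost_overrun_pct, time_overrun_pct)
    if total <= contingency_pct:
        return "within contingency"
    if total <= bar_pct:
        # Contingency is used up, but still inside the margin of error.
        return "contingency consumed"
    return "problem"


if __name__ == "__main__":
    for cost, time in [(20, 0), (0, 20), (10, 10), (15, 6), (20, 10)]:
        print(cost, time, overrun_status(cost, time))
```

The first three cases are the "success" scenarios described above; the 15% plus 6% case sits between the contingency and the bar, which is the point at which the projection deserves attention before it grows.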

The scale of the problem is defined by the distance to the finish line when the contingency is expected to be consumed.

If the project is verifiably close to finishing but strays outside the projected overrun limit, then the situation is a little painful, but a sprint finish will probably get you over the line. If the project is only 50% complete and is already projected to cross the overrun limit, you have a major problem.

Scope – complexity and expected quality

The scope consists of two principal elements: complexity (or breadth) and quality. Complexity should be defined as early as possible in a project as a multi-section prioritized list with various levels, ranging from the minimum viable product (MVP = Business Critical = “must have” etc.) through to the perceived ultimate requirement (with lower priority items listed where known). The success of the project depends on delivering the MVP as quickly as possible. Do not get into a situation where all scope is high priority; this in its own right will likely cause the project to fail.

The key measure pointing to a potential crisis in a project is whether the MVP is stable or growing. If functions or complexity are being added, are others being removed to keep the total MVP bucket size the same? If the scope bucket grows by over 10%, you have a potential for a crisis.
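As a rough sketch, the scope check is a simple percentage comparison against the MVP baseline. How you size the bucket (function count, story points, estimated days) is up to you; the function names and the sample numbers below are hypothetical, and only the 10% limit comes from the text.

```python
def scope_growth_pct(baseline_size: float, current_size: float) -> float:
    """Growth of the MVP bucket relative to its agreed baseline, in percent."""
    if baseline_size <= 0:
        raise ValueError("baseline_size must be positive")
    return (current_size - baseline_size) * 100.0 / baseline_size


def scope_at_risk(baseline_size: float, current_size: float,
                  limit_pct: float = 10.0) -> bool:
    """True when the MVP bucket has grown by more than the limit."""
    return scope_growth_pct(baseline_size, current_size) > limit_pct


if __name__ == "__main__":
    # Hypothetical MVP sized at 200 units at baseline, now at 230.
    print(scope_growth_pct(200, 230), scope_at_risk(200, 230))
```

Items that were added and then offset by removals net out here, since only the total bucket size is compared.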

Moving on to quality: software should work, but more importantly, when it doesn’t, what is the difference between the fault raise rate and the fix rate? If it is trending negative (more defects fixed than found), then the project is making progress. In large projects, this trend may take a little time to show itself at the start of testing, but it will not take long. Secondly, what is the defect re-raise rate, that is, how many defects reappear or were never fixed despite being “cleared”? In my experience, if this rate is over 3-5%, you have a quality problem and a potential for crisis.
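Both quality measures can be tracked with very little arithmetic. The sketch below assumes you can pull weekly counts of defects raised and fixed, plus totals for defects cleared and re-raised, from your defect tracker; the function names and the sample figures are hypothetical, and using 5% (the top of the 3-5% range) as the default limit is my own choice for illustration.

```python
from typing import List


def net_defect_change(raised: List[int], fixed: List[int]) -> List[int]:
    """Per-period raised minus fixed; negative means more fixed than found."""
    if len(raised) != len(fixed):
        raise ValueError("raised and fixed must cover the same periods")
    return [r - f for r, f in zip(raised, fixed)]


def reraise_rate_pct(reraised: int, cleared: int) -> float:
    """Share of 'cleared' defects that came back, in percent."""
    if cleared <= 0:
        raise ValueError("cleared must be positive")
    return reraised * 100.0 / cleared


def quality_problem(reraised: int, cleared: int, limit_pct: float = 5.0) -> bool:
    """True when the re-raise rate exceeds the chosen limit."""
    return reraise_rate_pct(reraised, cleared) > limit_pct


if __name__ == "__main__":
    # Hypothetical four weeks of testing.
    print(net_defect_change([40, 35, 30, 20], [20, 30, 32, 28]))
    print(reraise_rate_pct(6, 100), quality_problem(6, 100))
```

In the sample data the net change moves from positive to negative over the four weeks, which is the trend you want to see once testing is under way.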

Finally, commitment from all parties

Are the sponsor, customer, supplier, project manager, and the team committed to the success of the project? Taking each in turn:

Sponsor / Customer: Do you really need this project to be successful? Are you holding regular, real reviews of the project, and are you clearing escalated issues in a timely manner? Have you allocated your resources to the project (despite the fact that they all have “real” full-time jobs)?

Supplier: Is your team motivated by the client’s success, appropriately skilled, and sized to be successful? Are you reviewing this on an ongoing basis?

Project Manager: Are you committed to delivering this project, regardless of the problems that may arise? Are you prepared to manage the project, not just measure it? Are you ready to take advantage of opportunities to go faster (because some things will slow you down, and it’s your job to figure out how to compensate)?

Team: Have the client and supplier teams bonded, and are they working together successfully towards the common goal of completing the project?

The absence of any of these facets of commitment is likely to push the project into crisis, which will ultimately surface as vendor friction and as time, budget, and scope issues.

In conclusion, IT projects are hard, and it’s easy to get them badly wrong. The key to avoiding a disaster is to understand when they are starting to go off the rails. That is the time to face the potential problem, before it turns into a disaster. These simple measures can be used by non-technical executives to get a real idea of where their projects are.